I’ve been a trader and investor for 44 years. I left Wall Street long ago, once I understood that their obsolete advice is designed to profit them, not you.

Today, my firm manages around $4 billion in ETFs, and I don’t answer to anybody. I tell the truth because trying to fool investors doesn’t help them, or me.

In Daily H.E.A.T., I show you how to Hedge against disaster, find your Edge, exploit Asymmetric opportunities, and ride major Themes before Wall Street catches on.

H.E.A.T.

Nvidia’s “Inference Pivot” is the loudest quiet signal in AI

For the last three years, AI investing has been sold like a moonshot: train bigger models, buy more GPUs, repeat. And Nvidia became the “picks and shovels” king of the gold rush.

But the market is changing the question. It’s no longer “Who can train the smartest model?” It’s “Who can run it cheaply, instantly, and at insane scale… without blowing up the power bill?” That’s inference — the moment a model actually answers a user, generates code, drafts a report, or runs an agent. And inference is where AI becomes a business instead of a science project.

That’s why this Nvidia move matters: reports indicate Nvidia is preparing a new inference-focused system that even pulls in Groq’s inference architecture — and OpenAI is positioned as a major customer. Translation: even the GPU emperor is acknowledging that the next bottleneck isn’t just training FLOPS. It’s inference efficiency, latency, and watts. And when the market leader starts redesigning the stack, it usually means the old stack is getting attacked.

Nvidia already signaled this direction in other ways: it has been pushing CPUs harder (Grace/Vera), including standalone CPU deployments for certain “AI agent” workloads — i.e., cases where GPUs are expensive overkill. And it has reportedly inked a deal that licenses Groq’s inference technology and brings Groq’s founder/CEO (and other execs) into Nvidia, underscoring how seriously Nvidia is taking the inference arms race.

Why inference changes the economics (and the stock market)

Training is a giant, lumpy capex event. You buy the machines, train the model, brag on stage.

Inference is a 24/7 utility bill.

It’s the cost of every answer your AI gives.

If AI agents really spread through the enterprise, inference becomes the “new internet traffic.” Not one big build — a permanent throughput problem: latency, memory bandwidth, networking, storage, and power. The winner isn’t just the company with the biggest chip — it’s the company that can deliver the most useful tokens per watt per dollar, reliably, at scale.
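To make "tokens per watt per dollar" concrete, here is a minimal back-of-the-envelope sketch. Every number in it is a hypothetical placeholder, not data on any real chip or vendor, and the names (`InferenceSystem`, `cost_per_million_tokens`, `tokens_per_joule`) are mine for illustration. It assumes the only costs are electricity and straight-line hardware amortization, which ignores cooling, networking, staff, and utilization.

```python
from dataclasses import dataclass

HOURS_PER_YEAR = 24 * 365


@dataclass
class InferenceSystem:
    """Hypothetical serving system; every figure is an illustrative placeholder."""
    name: str
    tokens_per_second: float   # sustained throughput
    power_kw: float            # average power draw, in kilowatts
    hardware_cost_usd: float   # upfront purchase price
    lifetime_years: float      # straight-line amortization period


def cost_per_million_tokens(system: InferenceSystem, usd_per_kwh: float) -> float:
    """Electricity plus amortized hardware cost per one million tokens served."""
    tokens_per_hour = system.tokens_per_second * 3600
    energy_cost_per_hour = system.power_kw * usd_per_kwh
    hardware_cost_per_hour = system.hardware_cost_usd / (
        system.lifetime_years * HOURS_PER_YEAR
    )
    return (energy_cost_per_hour + hardware_cost_per_hour) / tokens_per_hour * 1_000_000


def tokens_per_joule(system: InferenceSystem) -> float:
    """Tokens per second per watt, which is the same thing as tokens per joule."""
    return system.tokens_per_second / (system.power_kw * 1000)


# Two made-up systems with equal throughput but different power and price.
systems = [
    InferenceSystem("general-purpose box", 10_000, 10.0, 300_000, 4),
    InferenceSystem("inference-tuned box", 10_000, 5.0, 200_000, 4),
]

for s in systems:
    print(
        f"{s.name}: {tokens_per_joule(s):.2f} tokens/joule, "
        f"${cost_per_million_tokens(s, usd_per_kwh=0.10):.3f} per million tokens"
    )
```

The point of the sketch is the shape of the math, not the outputs: at equal throughput, the system that draws less power and costs less up front wins on every token it serves, and that gap compounds around the clock.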

And here’s the uncomfortable twist: the biggest buyers of AI compute (hyperscalers) are also the companies Wall Street loved for being asset-light, cash-flow machines. Now they’re becoming capital-intensive infrastructure operators. That puts a spotlight on a question nobody could answer in 2023:

“Where does the ROI show up?”

If ROI is slow, CFOs do the only rational thing: they squeeze costs. And inference is the biggest “squeezable” line item in the AI stack. That’s why customers are diversifying away from one-chip-to-rule-them-all dependency. OpenAI, for example, has been expanding compute options and capacity — including major commitments for AWS capacity powered by Trainium chips. OpenAI has also pursued capacity with alternative compute providers like Cerebras.

So yes — this Nvidia inference pivot can be read as bullish (new TAM!), but it’s also a tell:

the compute customer is getting serious about price, performance, and power.

Winners: “Token tollbooths” and “inference plumbing”

If you want to frame the “inference era” like an investor, forget who has the coolest demo. Focus on who gets paid when inference scales.

Likely winners (stocks)

Core inference silicon / custom compute

NVDA — Still the center of gravity, if it successfully extends its moat from “GPU dominance” into “full-stack inference factory.” The Groq tie-in screams: protect the perimeter.

AVGO (Broadcom) — The quiet kingmaker in custom silicon and networking. If hyperscalers keep building specialized inference hardware, Broadcom tends to be in the room.

AMD — The “credible #2” that benefits from any broadening beyond one vendor. If inference spend becomes more price-sensitive, share becomes more contestable.

The stuff inference devours: memory, networking, storage

MU (Micron) — Inference isn’t just compute; it’s memory bandwidth and capacity to keep models fed.

ANET (Arista) / AVGO — Every token is data moving through networks. Inference scaling is a networking story.

WDC / STX — If more inference means more retrieval, more logs, more model versions, more data… storage demand doesn’t go down.

Power & cooling: the unsexy constraint that decides who wins

VRT (Vertiv), ETN (Eaton) — Power distribution and data-center infrastructure.

PWR (Quanta Services) — Grid buildout and transmission work is the “real world” bottleneck trade.

GEV (GE Vernova) — Turbines, grid gear, electrification exposure.

If inference is the future, the market doesn’t just need chips — it needs electrons.

Losers: “Inference margin compression” and “AI capex hangovers”

This is where you can build a short watchlist that isn’t just “short anything with AI in the deck.”

Likely losers (stocks to scrutinize hard)

AI capex stories where the ROI is fuzzy

Cloud/capex-heavy narratives can get punished if growth is real but margins compress — because the bill arrives before the payoff. (This is where the market starts sniffing around debt and free cash flow instead of PowerPoints.)

Seat-based software models that don’t control the outcome

If the AI labor narrative proves even partially true, “per-seat” pricing models face a headwind: fewer employees → fewer seats → slower top-line growth. Names that can’t pivot to usage/value pricing could get de-rated.
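The seat math is simple enough to sketch. This is a rough illustration with hypothetical inputs (a 10% seat cut, a 5% price increase), not an estimate for any company, and the function names are mine. It assumes revenue is just seats times price per seat.

```python
def revenue_growth(seat_change: float, price_change: float) -> float:
    """Growth in seat-based revenue given fractional changes in seats and price."""
    return (1 + seat_change) * (1 + price_change) - 1


def price_increase_to_offset(seat_change: float, target_growth: float = 0.0) -> float:
    """Per-seat price change needed to reach target_growth after a seat change."""
    if seat_change <= -1:
        raise ValueError("seat_change must be greater than -100%")
    return (1 + target_growth) / (1 + seat_change) - 1


# Hypothetical scenario: customers cut seats by 10%, the vendor raises price by 5%.
print(f"Revenue growth: {revenue_growth(-0.10, 0.05):.1%}")

# Price increase needed just to keep revenue flat after the same 10% seat cut.
print(f"Price increase to stay flat: {price_increase_to_offset(-0.10):.1%}")
```

Under these assumptions, a vendor has to raise per-seat prices by more than the seat decline just to stand still, which is why the ability to pivot to usage or value pricing matters.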

Infrastructure middlemen with weak moats

Any “rent-a-GPU” or “me-too inference host” model is vulnerable if hyperscalers and top labs push costs down using custom silicon and direct supply.

And the “sneaky” loser category:

anything priced like it will capture infinite AI growth while ignoring power, latency, and customer bargaining power. Inference puts the customer back in charge.

The bottom line

The easiest way to understand this moment:

Training is building the brain. Inference is running the business.

And Nvidia’s move into inference-specific systems is the market leader telling you the next war is about speed, cost, and power efficiency — not just raw compute.

The AI race isn’t ending. It’s maturing.

And when a tech theme matures, the biggest money moves from hype… to unit economics.

News vs. Noise: What’s Moving Markets Today

Yesterday played out as planned: stocks dipped, oil ripped, and then the market ended green. Today is starting off ugly, and I expect it to stay that way unless we get something new. As I said yesterday, keep an eye on oil prices; they are the best tell for where stocks should go.

There is still a lot going on in the market, but for now it will all be obscured by the back and forth in the war. As always, make sure you have hedges in place.

Two of the most interesting areas in the market lately have been photonics and memory. Photonics stocks ripped yesterday on news that NVDA will invest $4bn into LITE and COHR. These have both been featured here as a Stock I’m Watching, and are in UFOD. This area is extended, and these stocks are selling off so far this morning.

As for memory names, what I talk about above could have an impact. Groq relies on static random-access memory (SRAM) rather than DRAM and HBM, which could cause a reaction in these stocks. Memory names are also selling off hard this morning.

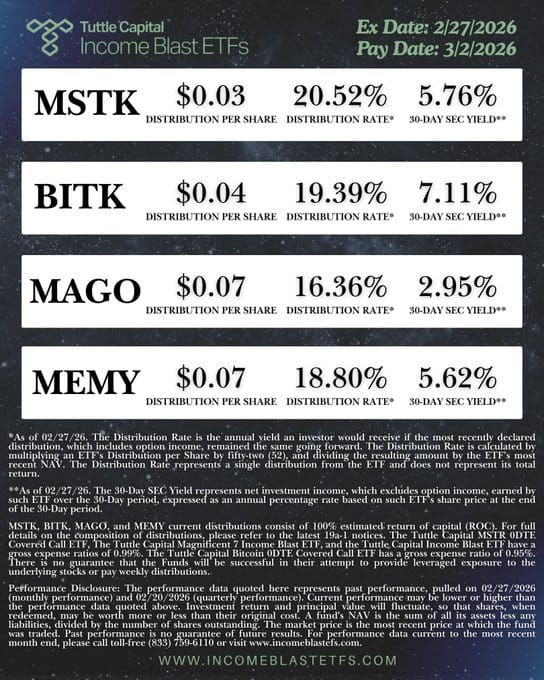

ETF News

A Stock I’m Watching

OUST is one of the cleaner “physical AI” ways to play autonomy: it sells digital LiDAR sensors that act as the eyes for robots, industrial automation, mapping, and increasingly defense/UAS workflows. The bull case isn’t “AI hype” so much as forced spend: once customers decide to automate warehouses, perimeter security, ports, rail yards, or drones, they have to buy reliable perception hardware and the software layer around it—LiDAR is a gating item. In its latest update, Ouster highlighted product revenue around $41M and total revenue around $62M (including royalties), with ~8.1k sensors shipped and ~$177M in product bookings, then guided the next quarter to ~$45–48M revenue with ~28–30% gross margin as it integrates StereoLabs (which it says closed in early February 2026). The “why now” catalyst that matters for a UFOD-style sleeve is defense credibility: Reuters reported Ouster’s OS1 being added to the DoD’s Blue UAS Framework, which is the kind of compliance/approval milestone that can unlock program-level demand vs. one-off pilots. The key risk is still classic small-cap hardware execution: competition and pricing pressure can be brutal, and even with demand, margin/FCF can lag if capacity, go-to-market, and software investment ramp faster than revenue.

The stock is currently up over 16% in the premarket and would open above its 50-day moving average. Bulls would like to see it break back above the 200-day as well.

In Case You Missed It

Talking with Josh Brown about European Digital Sovereignty and European Defense….

The H.E.A.T. (Hedge, Edge, Asymmetry and Theme) Formula is designed to empower investors to spot opportunities, think independently, make smarter (often contrarian) moves, and build real wealth.

The views and opinions expressed herein are those of the Chief Executive Officer and Portfolio Manager for Tuttle Capital Management (TCM) and are subject to change without notice. The data and information provided is derived from sources deemed to be reliable but we cannot guarantee its accuracy. Investing in securities is subject to risk including the possible loss of principal. Trade notifications are for informational purposes only. TCM offers fully transparent ETFs and provides trade information for all actively managed ETFs. TCM's statements are not an endorsement of any company or a recommendation to buy, sell or hold any security. Trade notification files are not provided until full trade execution at the end of a trading day. The time stamp of the email is the time of file upload and not necessarily the exact time of the trades. TCM is not a commodity trading advisor and content provided regarding commodity interests is for informational purposes only and should not be construed as a recommendation. Investment recommendations for any securities or product may be made only after a comprehensive suitability review of the investor’s financial situation. © 2026 Tuttle Capital Management, LLC (TCM). TCM is a SEC-Registered Investment Adviser. All rights reserved.